Out-of-Place Key-Value Stores

Modern data systems are required to process an enormous amount of data generated by a variety of applications. To support this requirement, modern data stores reduce read/write interference by employing the out-of-place paradigm. The classical out-of-place design is the log-structured merge (LSM) tree. LSM-trees write incoming key-value entries to an in-memory buffer to ensure high write throughput, and use in-memory auxiliary data structures (such as Bloom filters and fence pointers) to provide competitive read performance. While LSM-trees perform well for writes and reads in general, we show that all state-of-the-art LSM-based data stores perform suboptimally in the presence of deletes in a workload.
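To make the role of fence pointers concrete, here is a minimal sketch, assuming a sorted run split into fixed-size pages with one in-memory fence pointer (the smallest key) per page. The `Run` class, the `page_size` parameter, and the `pages_read` counter are hypothetical names used only for illustration; they do not come from any particular data store.

```python
import bisect


class Run:
    """A sorted run split into pages, with one fence pointer per page (illustrative)."""

    def __init__(self, sorted_items, page_size=2):
        # sorted_items: list of (key, value) pairs, already sorted by key.
        self.pages = [
            sorted_items[i:i + page_size]
            for i in range(0, len(sorted_items), page_size)
        ]
        # Fence pointers: the smallest key stored in each page, kept in memory.
        self.fences = [page[0][0] for page in self.pages]
        self.pages_read = 0  # counts simulated page reads

    def get(self, key):
        # Binary search over the fence pointers finds the only page
        # that could contain the key.
        idx = bisect.bisect_right(self.fences, key) - 1
        if idx < 0:
            return None  # key is smaller than every key in the run
        self.pages_read += 1  # at most one page is read per lookup
        for k, v in self.pages[idx]:
            if k == key:
                return v
        return None


run = Run([(1, "a"), (3, "b"), (5, "c"), (7, "d"), (9, "e")])
value = run.get(7)
```

A point lookup on the sort key therefore reads at most one page per run in this sketch, which is the property that deletes on a different attribute cannot exploit.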

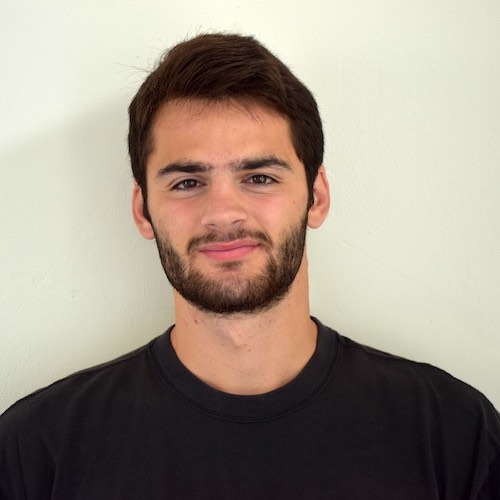

Deletes in LSM-trees

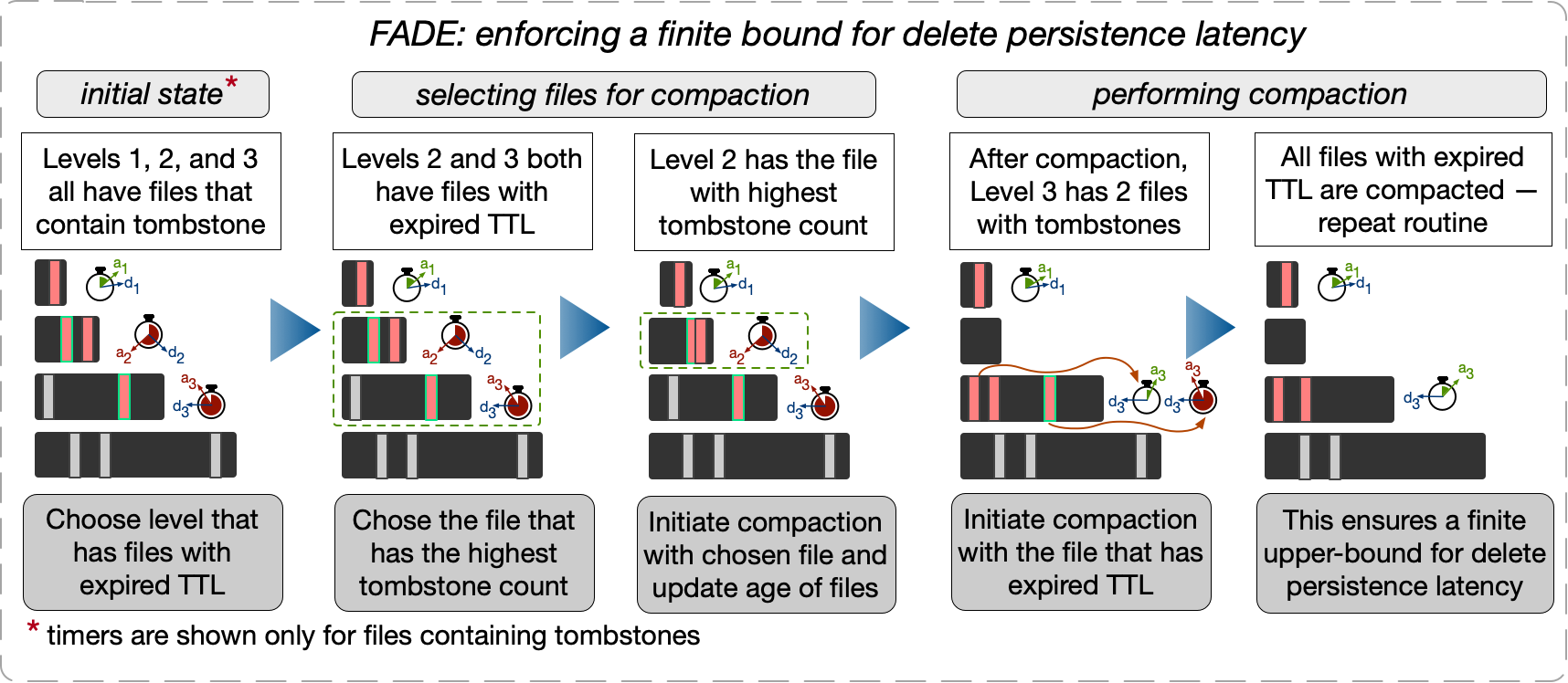

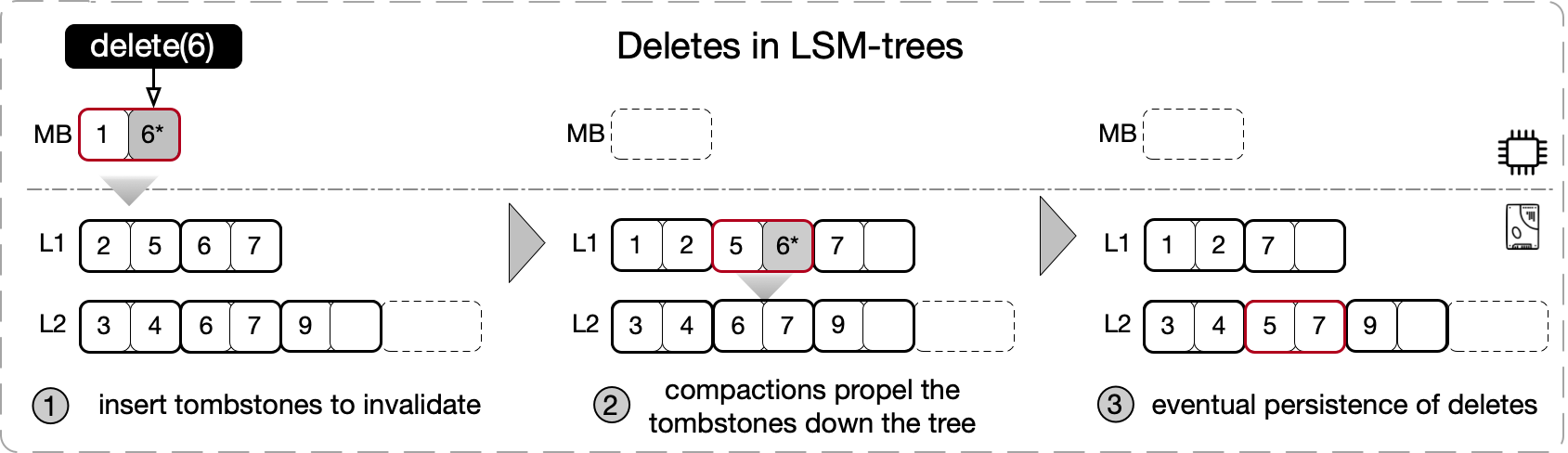

Deletes in LSM-trees are realized logically by inserting a special type of key-value entry, known as a tombstone. Once inserted, a tombstone logically invalidates all entries in the tree that have a matching key, without necessarily disturbing the physical target data entries. The target entries are persistently deleted from the data store only after the corresponding tombstone reaches the last level of the tree through compactions. The time taken to persistently delete the target entries depends on (i) the file-picking policy followed by the compaction routine, (ii) the rate of ingestion of entries to the database, (iii) the size ratio of the LSM-tree, and (iv) the number of levels in the tree.
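The logical nature of a tombstone can be sketched as follows. This is a toy model, assuming each level is a single dictionary and that lookups probe the buffer first and then the levels from newest to oldest; `ToyLSM`, `TOMBSTONE`, and `physical_copies` are hypothetical names introduced for illustration.

```python
TOMBSTONE = object()  # hypothetical sentinel standing in for a tombstone entry


class ToyLSM:
    """Toy LSM-tree: an in-memory buffer plus levels, newest data first."""

    def __init__(self, num_levels=3):
        self.buffer = {}
        self.levels = [dict() for _ in range(num_levels)]

    def put(self, key, value):
        self.buffer[key] = value

    def delete(self, key):
        # A delete is just another insert: it writes a tombstone to the buffer.
        self.buffer[key] = TOMBSTONE

    def get(self, key):
        # Probe newest to oldest; the first match decides the result.
        for run in [self.buffer, *self.levels]:
            if key in run:
                value = run[key]
                return None if value is TOMBSTONE else value
        return None

    def physical_copies(self, key):
        # Number of non-tombstone entries for the key still stored in the levels.
        return sum(
            1 for run in self.levels
            if key in run and run[key] is not TOMBSTONE
        )


lsm = ToyLSM()
lsm.levels[2][6] = "old"    # older version of key 6 in the last level
lsm.levels[1][6] = "newer"  # more recent version one level up
before = lsm.get(6)
lsm.delete(6)
after = lsm.get(6)
remaining = lsm.physical_copies(6)
```

After the delete, lookups for key 6 return nothing, yet both older versions are still physically present in the tree until compactions catch up.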

The figure above shows how tombstone-driven logical deletes eventually become persistent, using an example where we want to delete all entries with key 6 from an LSM-based data store. In Step 1, a tombstone for 6, denoted by 6*, is inserted into the tree. Step 2 shows how the file containing the tombstone 6* moves to the next level of the tree due to (partial) compaction. Finally, Step 3 shows how all entries with a matching key are eventually persistently removed from the LSM-tree after the tombstone reaches the last level of the tree.
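The three steps can be mimicked with a small sketch. It assumes, as a simplification, that a compaction merges an entire level into the next one (real systems typically compact individual files), and that tombstones are dropped only when the merge target is the last level. The `compact` function, the `TOMBSTONE` sentinel, and the sample entries are hypothetical.

```python
TOMBSTONE = object()  # hypothetical sentinel standing in for the tombstone 6*


def compact(levels, src):
    """Merge level `src` into level `src + 1` (whole-level compaction, simplified)."""
    dst = src + 1
    is_last = dst == len(levels) - 1
    merged = dict(levels[dst])
    merged.update(levels[src])  # entries from the newer level win
    if is_last:
        # Only at the last level can the tombstone and its targets be purged.
        merged = {k: v for k, v in merged.items() if v is not TOMBSTONE}
    levels[dst] = merged
    levels[src] = {}


# Step 1: a tombstone for key 6 sits in the first level.
levels = [{6: TOMBSTONE}, {6: "v2", 7: "b"}, {6: "v1", 3: "a"}]

# Step 2: the tombstone moves down one level and replaces the copy it meets there.
compact(levels, 0)
tombstone_in_middle = levels[1][6] is TOMBSTONE
old_copy_still_present = levels[2][6] == "v1"

# Step 3: the tombstone reaches the last level and key 6 is gone for good.
compact(levels, 1)
final_last_level = levels[2]
```

Between Step 2 and Step 3 the oldest copy of key 6 is still on disk, which is why the time to persistent deletion depends on how quickly compactions push the tombstone down.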

practical workloads. Modern LSM-based data stores are unable to realize secondary range deletes efficiently, as the entries qualifying for such an operation may be scattered all across the tree. The way commercial data stores realize secondary range delete operations is by periodically (typically, every 7, 15, or 30 days) compacting the whole tree to a single base level. Such full tree compactions are highly undesirable as they require many superfluous I/Os to the disk and may also lead to prolonged write stalls and latency spikes.

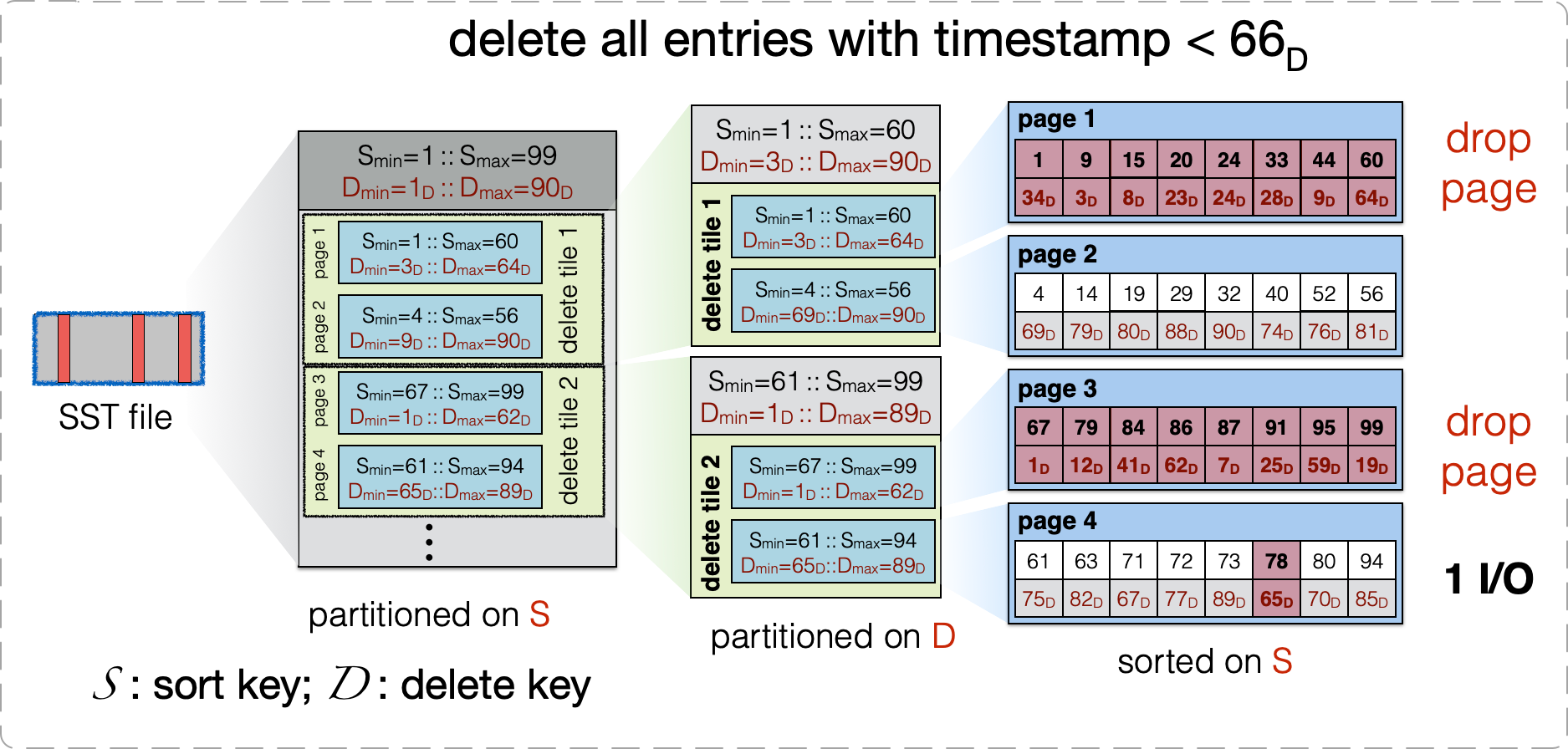

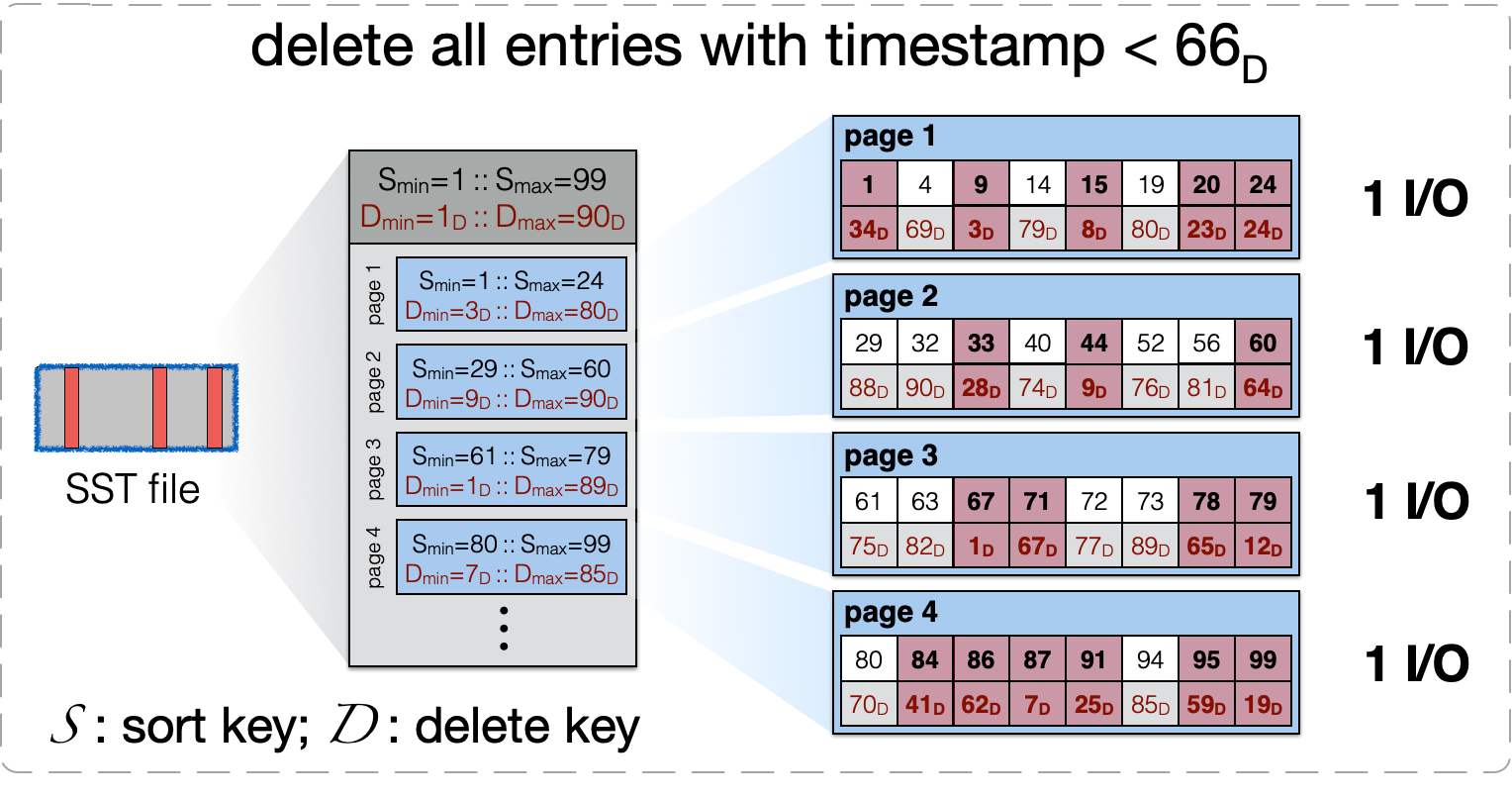

In the example here, we use S to denote the sort key and D for the delete key. We observe that to realize a secondary range delete operation that deletes all entries with a timestamp smaller than 66, all four pages shown must be read into memory.
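The cost of such an operation can be illustrated with a short sketch. The page contents below are made-up values, not the ones in the figure; they only assume that pages are ordered on the sort key S while the delete key D is uncorrelated with S. The function name `secondary_range_delete` is likewise hypothetical.

```python
# Each page holds (S, D) pairs; pages are sorted and partitioned on S only.
# The values are illustrative and are not taken from the figure.
pages = [
    [(1, 80), (2, 15)],
    [(3, 70), (4, 33)],
    [(5, 99), (6, 41)],
    [(7, 52), (8, 68)],
]


def secondary_range_delete(pages, d_threshold):
    """Drop every entry with D < d_threshold; return the new pages and the I/O counts."""
    pages_read = 0
    pages_rewritten = 0
    kept_pages = []
    for page in pages:
        # Nothing is organized on D, so every page has to be read and inspected.
        pages_read += 1
        kept = [(s, d) for (s, d) in page if d >= d_threshold]
        if len(kept) != len(page):
            pages_rewritten += 1
        kept_pages.append(kept)
    return kept_pages, pages_read, pages_rewritten


kept_pages, pages_read, pages_rewritten = secondary_range_delete(pages, 66)
```

Because the qualifying entries are spread over every page, the delete reads and rewrites all four pages even though only half of the entries are removed.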
